Validation feedback can provide important social affirmation

Posted by dekstop on 8 February 2017 in English. Last updated on 27 March 2017.

After my talk at State of the Map in Brussels, Nick Allen asked: are newcomers to HOT more likely to be retained if we give them positive validation feedback? And conversely, do we discourage them if we invalidate their work? I had no answer at the time, in part because many validation interactions are not public. However, I agreed with his observation that these are likely important early encounters, and that we should make an effort to understand them better. In particular, we should be able to provide basic guidance to validators, based on empirical observations of past outcomes. What are the elements of impactful feedback?

I spoke to Tyler Radford about these concerns that same day, and within a few days we signed an agreement which gives me permission to look at the data, provided I do not share any personal information. The full write-up of the resulting research is now going through peer review, and I will share it when that’s done. In the meantime, I thought I should publish some preliminary findings.

Manually labelling 1,300 messages…

I spent the next months diving into the data, reviewing 1,300 validation messages that had been sent to first-time mappers. I labelled the content of each message using models from motivational psychology and from research on feedback in educational settings. For now I’ll skip the details, but feel free to ask questions in the comments.

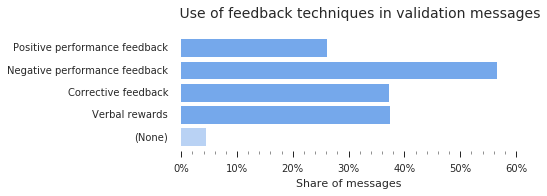

I assessed the impact of different kinds of newcomer feedback:

- Positive performance feedback: messages including comments like “good job”, “great work”, “looks good”, …

- Negative performance feedback: “doesn’t look complete”, “missing tags”, “needs improvement”, …

- Corrective feedback: guidance about specific ways to improve future work, including links to documentation.

- Verbal rewards: messages containing positive performance feedback, gratitude (“thanks!”), or encouragement (“keep mapping”).

Here’s a chart of the frequency of each type of feedback across the messages I labelled:

To measure the effect of these feedback types, I collected the contributions of each newcomer over a 45-day period after their initial edit, and labelled the content of the first feedback message they received during this time. I then observed for how many days they remained active, or whether they dropped out (as measured by an additional 45-day period of inactivity). Finally, I used a Cox proportional hazards model to explain the retention rates we observed, based on a set of features and control variables. This is comparable to a regression analysis, but specifically intended to model participant “survival”. In the context of this study, the term “hazard” is a synonym for the risk of abandoning HOT participation. A hazards model yields a hazard rate (or rate of risk) for each contributing factor, denoting the relative change in hazard when a particular feature is present. For example, a hazard rate of 2.0 means that, at any point during the observation period, a person with that feature is twice as likely to stop contributing as a comparable person without it. Conversely, a hazard rate of 0.5 means their risk of dropping out is halved, so they are more likely to still be active at the end of the observation period.
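For readers who want to see what such a model actually estimates, here is a minimal sketch of a Cox proportional hazards fit with a single yes/no feature (for example, whether the first message contained verbal rewards). It is not the analysis behind this post, which used several features and control variables. It is plain Python using Newton-Raphson on the partial likelihood, with the Breslow approximation for newcomers who drop out on the same day. The function name and the data are invented for illustration. A real analysis would normally use a dedicated survival-analysis package, such as lifelines in Python or the survival package in R.

```python
import math


def cox_single_covariate(times, events, x, max_iter=50, tol=1e-10):
    """Estimate the Cox coefficient for one covariate by Newton-Raphson.

    times:  days each newcomer remained active (hypothetical field)
    events: 1 if the newcomer dropped out, 0 if still active (censored)
    x:      1 if the feature is present (e.g. verbal rewards), else 0
    Tied drop-out days use the Breslow approximation.
    Returns beta; the hazard rate for the feature is exp(beta).
    """
    beta = 0.0
    n = len(times)
    for _ in range(max_iter):
        score = 0.0  # first derivative of the log partial likelihood
        info = 0.0   # observed information (minus the second derivative)
        for i in range(n):
            if not events[i]:
                continue  # censored newcomers only appear in risk sets
            # Risk set: everyone whose recorded time is at least times[i].
            s0 = s1 = s2 = 0.0
            for j in range(n):
                if times[j] >= times[i]:
                    w = math.exp(beta * x[j])
                    s0 += w
                    s1 += w * x[j]
                    s2 += w * x[j] ** 2
            mean_x = s1 / s0
            score += x[i] - mean_x
            info += s2 / s0 - mean_x ** 2
        step = score / info
        beta += step
        if abs(step) < tol:
            break
    return beta


# Invented example: days active, drop-out flag, verbal rewards received.
days = [5, 8, 12, 20, 30, 45, 3, 4, 6, 10, 15, 45]
dropped = [1, 1, 1, 1, 1, 0, 1, 1, 1, 1, 1, 0]
rewards = [1, 1, 1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
beta = cox_single_covariate(days, dropped, rewards)
print(f"hazard rate for verbal rewards: {math.exp(beta):.2f}")
```

In this made-up data the newcomers who received verbal rewards tend to stay active longer, so the estimated hazard rate comes out below 1.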

Social affirmation matters: someone else cares

Maybe most importantly, I found that validation feedback can be an important source of social affirmation, which in turn can improve newcomer retention. This effect is clearest among newcomers who contributed comparatively little on their first day (mapping for less than the median of 75 minutes), possibly because they have low intrinsic motivation or self-efficacy. Among these, people who received verbal rewards in their first feedback message were significantly more likely to keep mapping: their hazard rate was reduced to 0.8, i.e. a 20% lower risk of dropping out at any given time. In comparison, newcomers who already start with a high degree of engagement may not require such affective-supportive feedback to remain engaged.
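A hazard rate of 0.8 is easy to misread as “20% more likely to keep mapping”. Under the proportional hazards assumption, a group’s survival curve is the baseline survival curve raised to the power of its hazard rate, so the actual gain in retention depends on the baseline. A minimal sketch, assuming a made-up baseline retention of 30% (not a figure from this study); `adjusted_survival` is a hypothetical helper name:

```python
def adjusted_survival(baseline_survival, hazard_rate):
    """Share still active at some time t, given the baseline share at t.

    Proportional hazards implies S_group(t) = S_baseline(t) ** hazard_rate.
    """
    if not 0.0 <= baseline_survival <= 1.0:
        raise ValueError("baseline_survival must be a proportion between 0 and 1")
    return baseline_survival ** hazard_rate


# Hypothetical: 30% of newcomers without verbal rewards are still active at time t.
baseline = 0.30
with_rewards = adjusted_survival(baseline, 0.8)
print(f"{baseline:.0%} -> {with_rewards:.0%}")  # 30% -> 38%
```

With a different baseline the same hazard rate would produce a different absolute difference in retention.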

This makes sense when you consider the wider context. The process of contributing to HOT online can be considered a depersonalised form of interaction: it is often focused on the task, rather than the learner. In the absence of other prominent social cues, small phrases of support may have a large effect. In the case of validation feedback, it’s likely also important that this is not simply an automated message. Instead, someone else looked over your work and then took the effort to write some kind words.

To my surprise, negative performance feedback in itself is not necessarily discouraging to newcomers: while it may demotivate some individuals, in aggregate across all newcomers there was no significant effect on retention. This includes instances of invalidated tasks, and negative performance feedback such as “your buildings are all untagged”. This may be because the feedback is private: people don’t have to be concerned about the impact on their reputation, and can focus on improving their skills. In communities like Wikipedia where feedback tends to be public (in the form of comments or reversions), it was found that negative feedback can harm newcomer retention. It’s also worth mentioning that even “negative” feedback in HOT still tends to be polite and constructive: HOT validators are generally a very polite bunch, based on the messages I’ve seen. They might simply point out that you forgot to square your buildings.

The timing of feedback matters: feedback that was sent within 28 hours (the median delay) yielded a reduction of the hazard rate to 80%. Every additional day of delay increased the hazard rate, which means that feedback sent a week or more later tended to have less of an impact. However, please regard this outcome with some suspicion. It likely has to do with how feedback is delivered: the current tasking manager cannot send email alerts, so people need to return of their own accord to see the message. I expect that we might see quite different behaviour once we start sending proper email notifications in a future iteration of the tasking manager. We might even observe that validation feedback can become an effective way to reactivate dormant mappers… I’m curious.
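Feedback timing can enter a hazards model in two ways: as a yes/no feature (sent at or below the median delay) or as a per-day effect that compounds with each day of delay. The sketch below illustrates both encodings. It is an assumption about how such features could be built, not the code used in this study. The helper names, the delays, and the per-day hazard rate of 1.02 are all hypothetical.

```python
from statistics import median


def quick_feedback_flags(delays_hours):
    """Split feedback delays at their median: 1 = at or below the median, 0 = slower."""
    cutoff = median(delays_hours)
    return cutoff, [1 if d <= cutoff else 0 for d in delays_hours]


def delay_hazard_rate(per_day_rate, delay_days):
    """Under proportional hazards, a per-day effect compounds multiplicatively:
    d days of delay multiply the hazard by per_day_rate ** d."""
    return per_day_rate ** delay_days


# Hypothetical feedback delays in hours (not data from the study).
delays = [2, 10, 28, 50, 200]
cutoff, quick = quick_feedback_flags(delays)
print(cutoff, quick)  # 28 [1, 1, 1, 0, 0]

# Hypothetical per-day hazard rate of 1.02: effect of one week of delay.
print(round(delay_hazard_rate(1.02, 7), 3))  # 1.149
```

Even a small per-day effect adds up: in this hypothetical case, a week of delay raises the hazard by roughly 15%.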

I now believe that these observations place validators at the core of the HOT community: for many contributors who can’t attend a mapathon, and who haven’t subscribed to the mailing list or joined IRC, validation feedback is their first experience of a social encounter. For a number of reasons, the current iteration of the tasking manager doesn’t easily support such interactions (maybe a topic for a future post); but I’m looking forward to the next iteration, which is already in planning. As I’ve learned through discussions, the validator community already has some great ideas about improving it even further.

The fine print

First off, this is an observational study, which comes with some constraints: we can identify links between validation styles and outcomes, and control for confounding factors through careful model design, which gives us some confidence in the findings. However, we would have to run actual experiments to confirm each link.

The models behind these findings account for a number of confounding factors. For example, I consider each newcomer’s initial contribution activity: were they already enthusiastic contributors to begin with? I also look at the particular project they start with: did they join during a disaster campaign, possibly in a wave of public interest? Such newcomers tend to not stick around for long.

And my usual caveat applies: I assessed the impact on contributor activity and retention, but not on contribution quality. This is in part because I still haven’t found a good approach to assessing contribution quality at this scale: there is no ground truth available for comparison, and contribution practices are diverse and often specific to the geographic or thematic context. Developing methods to assess data quality at this scale is a research project in its own right.

This is certainly not the final word on validation feedback, and I expect many others will add to this (maybe in the comments?). But it can hopefully serve as one contribution to our growing body of knowledge about how best to support our maturing community.

Discussion

Comment from SeleneYang on 8 February 2017 at 00:51

From #GeoChicas, we’re trying to observe the ways in which we can potentially bring in more girls to the OSM community, and also see how their sense of belonging in the community is constructed in relation to the sociability within our peers. I really enjoyed reading this entry. Thank you :3

Comment from Jennings Anderson on 8 February 2017 at 00:52

Very interesting work, Martin! I look forward to seeing the full write-up, best of luck with the review process.

Comment from SeleneYang on 8 February 2017 at 00:53

P.S. #GeoChicas is a Latam initiative from a group of women who got together at SOTM Latam last year in Sao Paulo to talk about closing the gender gap in the community :D

Comment from dekstop on 8 February 2017 at 00:58

Amazing, Selene! I’m very happy to hear about your initiative. And also Jennings – thanks for your kind words!

Comment from mapeadora on 8 February 2017 at 01:48

Hi, very interesting post! I think mappers’ reactions to feedback vary a lot and depend entirely on the mapper’s profile, type and style, motivation, and need for personal validation. That confirms to me the importance of studying this last part. A gender-based analysis of these reactions would be interesting too, including how the general tone of the feedback influences the participation of women. #GeoChicas

Comment from dekstop on 8 February 2017 at 02:03

mapeadora, thanks for your comment! I definitely agree – while large-scale studies can reveal some general tendencies, in the end these are very personal matters, and everyone will have their own curiosities and sensitivities, and respond to feedback in their own way. I also find it significant that you’re not the first person to mention the question of gender in this setting to me; there is a universe of social and contextual considerations worth exploring. However, the OSM edit history provides no information about the demographics of mappers… I hope other researchers will take on such questions, possibly using ethnographic methods.

Comment from nsmith on 8 February 2017 at 11:10

Great work Martin, and nice summary of the results. Super interesting to see numbers put to the idea that feedback matters. It’s also helpful for us as a community to look at this both from a technical perspective (development on the new TM) and from a community perspective (the culture of how we give feedback).

Comment from tyr_asd on 8 February 2017 at 12:13

Can you please explain (to a non-statistician) what that means exactly? For example: Does it mean mappers who received feedback, were ~20% more likely to continue mapping compared to those who weren’t messaged?

Comment from RebeccaF on 8 February 2017 at 14:56

A very interesting read, thank you for sharing! I’m quite surprised at the findings. It would be interesting to know how quickly the validation feedback was given. E.g. if the feedback is given 6 weeks after the mapping, negative feedback might have less of an impact than if it were given the same day/week. Might not be within your scope - just a thought :)

Comment from dekstop on 8 February 2017 at 15:32

Thanks Nate! Yes I thought I should start sharing these before the TM3 team starts committing to specific goals…

tyr_asd: ah, a very good point. I added a paragraph to the end of the labelling section to explain the statistical method. Please let me know if it’s still unclear; I guess I initially left it out because it’s quite hard to avoid using overly technical language when explaining it. (Maybe someone else has a better way of expressing it?)

RebeccaF: Thank you! Yes, I don’t go into the details here, but I found that the effect of feedback diminishes with each additional day of delay; this is independent of the kind of feedback that is given. Luckily, these days most feedback arrives quickly: as I mention above, 50% of all feedback was given within 28 hours. I expect that with our maturing validator community, this will get even quicker over time. Please let me know if that didn’t clarify it!

Comment from tyr_asd on 8 February 2017 at 15:43

@dekstop: Thanks for the additional explanation! It’s much clearer now.

Comment from andrewwiseman on 8 February 2017 at 23:26

This is really interesting, thanks Martin! Maybe I missed it but is there a way to tell if people read the feedback? There might be messages the validator sends but the user never logs in again and so doesn’t see them, which might (or might not) skew results.

Comment from andrewwiseman on 8 February 2017 at 23:29

And are these messages from the @ messages when you mark a task as validated or invalidated, such as “@johndoe thanks for mapping”? Then johndoe gets a ping.

Comment from Tallguy on 9 February 2017 at 00:50

Martin, Thank you for all your work on this - I don’t envy you reading and categorizing all those messages! For me, I think the biggest surprise is that even negative feedback is a sort of ‘positive’. The important message seems to be, there needs to be a message! Keep up the good work - it’s really great to have such a professional assessment. Regards Nick

Comment from BushmanK on 9 February 2017 at 04:31

@Tallguy, actually, it is not that surprising. It only shows that people who received negative feedback are socially mature individuals interested in improving their work. Reasonable negative feedback is a perfectly normal part of social interaction: it serves improvement by providing information on what exactly should be improved.

But that brings up the question of motivation. OSM contributors have very different motivations, from “making the best map of the world” to socializing while doing some mapping. Their reactions to different kinds of feedback likely vary accordingly, because feedback probably means different things to these people. For those who are interested in socializing, feedback is important as an affirmation. For others, who want to learn how to make the best map, it is valuable information about things to improve.

And this raises the question: what exactly does this analysis refer to? If it refers to HOT, that is perfectly okay, since it is based on data collected from communications within HOT. But if it refers to the whole of OSM, it could mean an unreasonable extrapolation and/or selection bias, not least because the motivation of HOT participants could be different, and that difference has not been studied scientifically.

Comment from andrewwiseman on 9 February 2017 at 22:01

@bushmank I would assume this is referring to Tasking Manager messages, so thus would be more related to HOT. I don’t know for sure though.

Comment from BushmanK on 9 February 2017 at 22:09

@Marion Barry, it is absolutely clear from the diary entry that communications within HOT were a material for this study. Question is, what exactly this study claims.

Comment from tekim on 9 February 2017 at 23:19

Very interesting study, Martin. However, while I believe it is important to give positive, and if necessary nice corrective, feedback (just because it is the right thing to do), I am surprised there was a strong correlation between the type of feedback and subsequent mapper behavior. My experience has been that new mappers don’t read their TM messages, either because they never log back into the TM after their first edit session, or because they ignore the notice that they have messages in their inbox. Is it possible that the mapper behavior is influencing the type of feedback as opposed to the other way around? In other words, mappers who “get it” from the start do a good job mapping and therefore get generally positive feedback. Because these mappers “get it”, they enjoy the activity, and are more likely to continue to contribute (who wants to keep doing something they are not good at?).

Comment from mapeadora on 10 February 2017 at 00:21

Comment for Tekim. Actually, you can receive the messages by email (I think it is the default), so you can read them as a new mapper. I am not an intensive mapper, because I put more effort into building community than into mapping. So I can be considered a beginner, in the sense that I am sensitive to messages from the community. And I can testify that I have several times felt demotivated by very demanding messages about the quality of my drawing and tagging: not detecting terraces on buildings and that type of thing, or drawing without enough precision on bad imagery in an emergency context (but those messages came from outside the HOT platform, some time after the TM). I can say that yes, it is a factor that has negatively shaped my mapping practice. Of course everybody reacts differently. I don’t consider that I didn’t “get it”; rather, I think that the sense of community and positive support play a variable role depending on the type of mapper. Secondly, as an active promoter of OSM, in the trainings we give to students in Mexico, we choose to value local knowledge above technical abilities. In a second phase, new mappers learn from the community’s high standards, but this works better when expressed in a positive tone.

Comment from tekim on 10 February 2017 at 01:12

@mapeadora Interesting about receiving HOT TM messages in ones email. I didn’t think this was possible. It would be great if it was! Perhaps you mean messages sent through the OSM website (e.g. change set comments)?

I agree with you that the wrong type of feedback can be very demotivating. I have been the recipient of that and felt hurt. When validating I always try to give positive feedback and nice corrective pointers. My point was that if people are not reading their HOT TM messages - and I don’t think data is available as to whether they are or not - there can be no causation, only correlation. My suspicion, and it is only a suspicion, is that if we could look only at cases where messages were read, there would be a much larger negative impact from negative comments.

Local knowledge is very important. I didn’t mean to imply that it was not. My point was that if a new mapper did happen to either have some existing mapping skills, or was able to quickly pick them up, they would be both more likely to get positive feedback and more likely to continue as a mapper. In other words, the common cause of both the positive feedback and the mapper returning is the initial or early skill.

Comment from Jorieke V on 11 February 2017 at 17:48

Hi Martin, great analysis again! And heads up to our validators, keep on going! I was wondering what the effect is of combining the different types of feedback in a message to the mapper. I can imagine that a combined message of negative performance feedback and verbal rewards will have higher effect, than only the negative feedback… or not?

Comment from TylerOSM on 13 February 2017 at 23:32

Martin, as always, a very interesting study with some important lessons for us to consider as a community. I for one am looking forward to more, better, and more timely feedback loops (and other forms of interaction among mappers-validators) in the next version of the Tasking Manager.

Comment from dekstop on 15 February 2017 at 15:31

Thanks all for the kind words! Many apologies for the delays, as you may have guessed I’m currently swamped with misc commitments. I’m collecting all feedback in preparation for a further analysis pass sometime in March, so all your suggestions are much appreciated. I’m particularly interested in your suggestions about how to safeguard the analysis: for an observational study like this, it’s important to measure carefully, and to take into account any confounding factors that may introduce a systemic bias in outcomes.

Marion Barry: I’m including both automated messages (required when rejecting a task) and manual @mentions, but only consider messages that have been sent by a validating user at the time of validation. I’m not currently considering whether people actually read the message, but agree that it would be a good addition; it can increase our confidence in the causal link.

Tallguy – I agree, this seems to be a good summary: “there needs to be a message”.

BushmanK: yes, this is about HOT contributors only, I do not observe OSM activity outside of HOT. I edited the intro to make it clear that I’m talking about HOT. It’s feasible that the OSM community might operate quite differently; it’s also likely a much more complex space than HOT.

tekim – correct, I don’t go into detail in the short writeup above, but I see that many mappers don’t return after their first contribution, and likely never see any messages. As you suggest, this introduces a potential for confounding factors, which I try to address by introducing a set of carefully selected control variables that can serve as broad proxies for a propensity to remain engaged. Please let me know if you have ideas for other factors to control for. However, this is still only an observational study, and there is a risk that we identify spurious causes. We would have to run experiments to confirm these relationships.

mapeadora – interesting, thanks for these examples! I appreciate when people share such stories, because currently I don’t think many people have reflected very deeply about the contributor experience from this side. Everyone’s experiences will be different, but the sharing of personal experiences helps a lot to make our understanding of the process more concrete.

tekim – I’ve seen some validators use OSM direct messages in addition to TM messages; unfortunately I don’t have access to OSM DMs, so can’t include them in the analysis.

Jorieke – intriguing thought! There definitely are different “styles” of validation, but so far I’ve not compared their impact; in part because we might not yet have enough observational data available for such a fine-grained analysis. Maybe in another 1-2 years… :)

Thanks Tyler! I’m looking forward to the next TM as well :) The design discussions around it are definitely moving in the right direction!