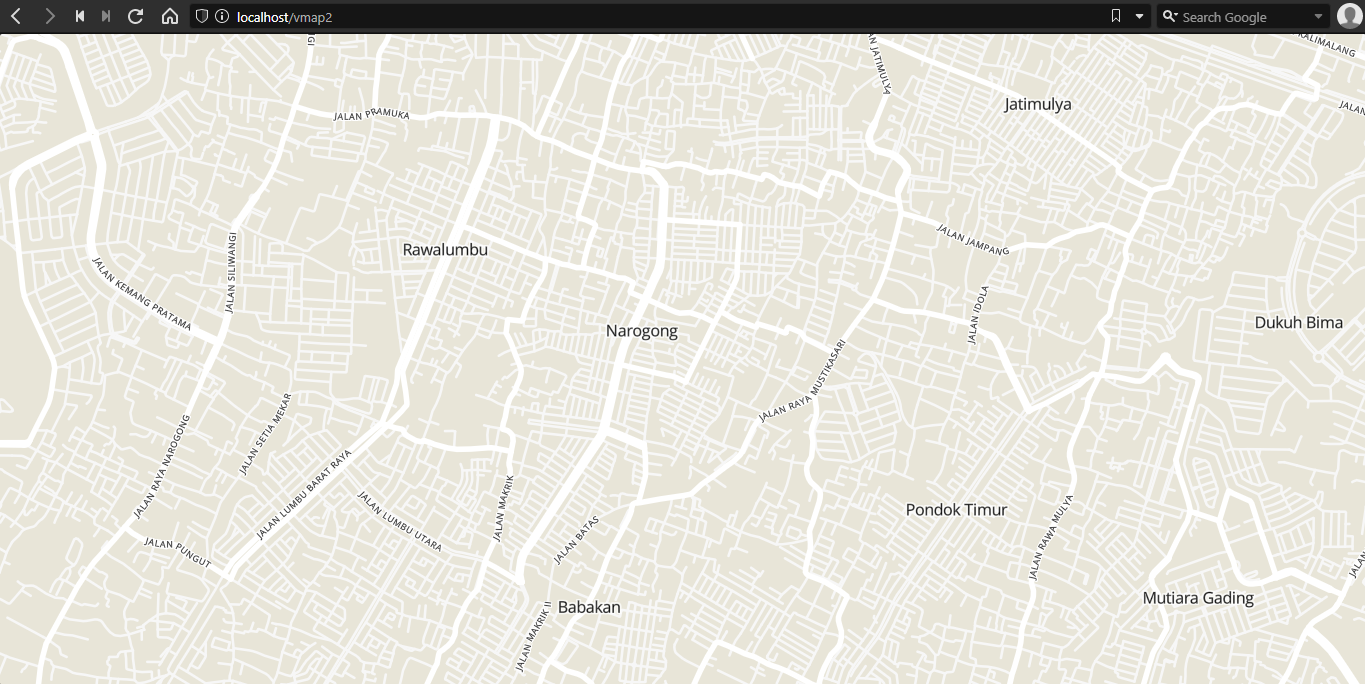

Inspired by this video, I wanted to make my own self-hosted vector tiles.

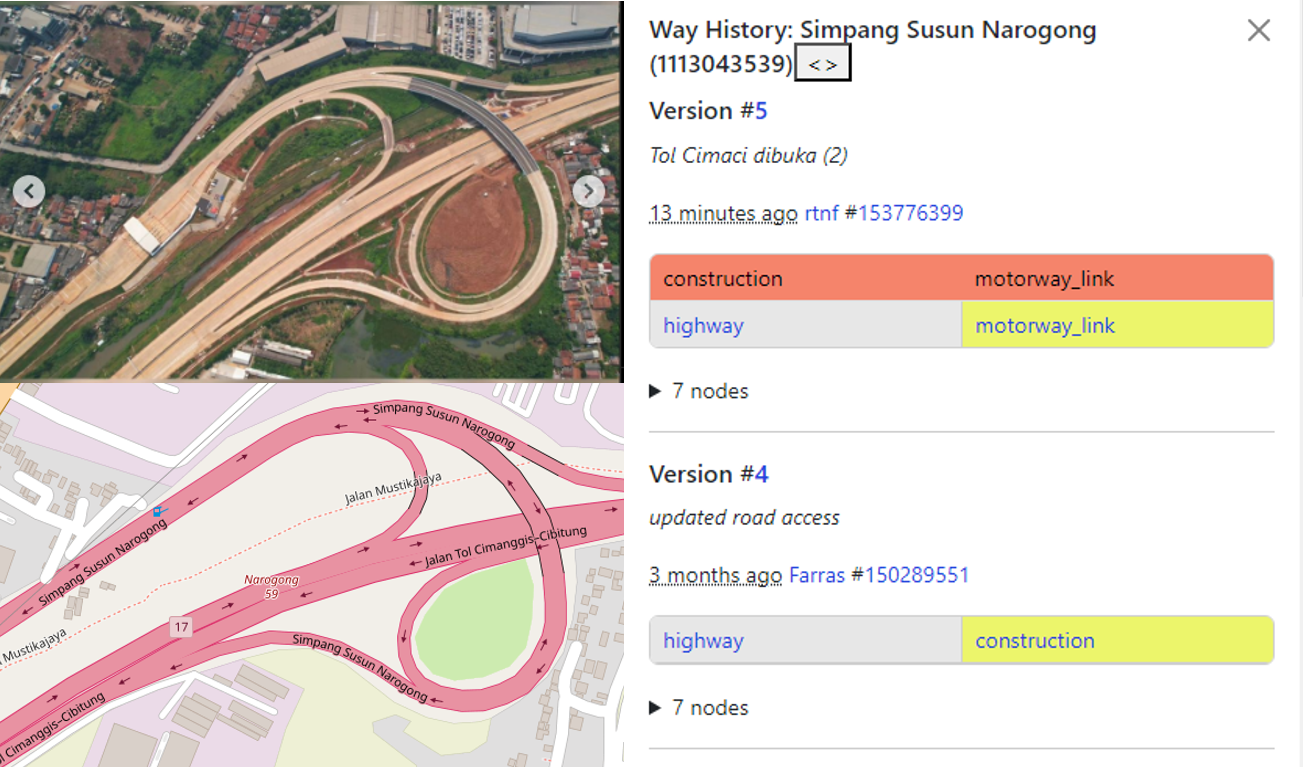

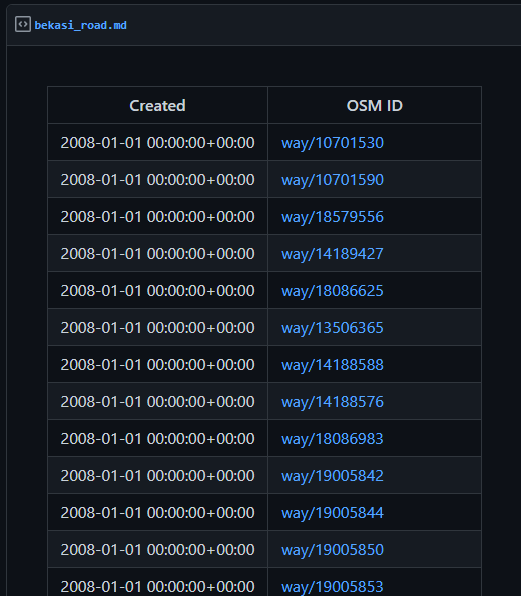

First, prepare several GeoJSON files using JOSM. Each GeoJSON file serves as one “layer”, and each layer can be styled separately. I made three layers (mainroad, suburb, jalan_rest) with the following specifications:

- mainroad: `highway=(secondary | primary | trunk | tertiary)`
- suburb: `place=suburb`
- jalan_rest: `highway=(* && not secondary && not primary && not trunk && not tertiary)`

In JOSM, run an Overpass query for one layer, save the result as a .geojson file, and repeat for each of the other layers.
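The three filters above can be written as Overpass QL. The following is a minimal sketch, not the exact queries used for this map: the helper name `buildOverpassQuery`, the bounding box values, the timeout, and the choice to query suburbs as nodes only are all assumptions.

```javascript
// Road classes that belong to the "mainroad" layer (taken from the list above).
const MAIN_ROAD_CLASSES = ["secondary", "primary", "trunk", "tertiary"];
const mainRoadRegex = `^(${MAIN_ROAD_CLASSES.join("|")})$`;

// One Overpass QL filter per layer. Querying suburbs as nodes is an assumption.
const LAYER_FILTERS = {
  mainroad: `way["highway"~"${mainRoadRegex}"]`,
  suburb: `node["place"="suburb"]`,
  jalan_rest: `way["highway"]["highway"!~"${mainRoadRegex}"]`,
};

// Hypothetical helper: bbox is [south, west, north, east], the order Overpass QL expects.
function buildOverpassQuery(layerName, bbox) {
  const filter = LAYER_FILTERS[layerName];
  if (!filter) throw new Error(`Unknown layer: ${layerName}`);
  return [
    "[timeout:60];",
    "(",
    `  ${filter}(${bbox.join(",")});`,
    ");",
    "(._;>;);", // also fetch the nodes that the matched ways refer to
    "out meta;",
  ].join("\n");
}

// Example bounding box (made up, roughly around the map center used later).
console.log(buildOverpassQuery("jalan_rest", [-6.4, 106.9, -6.2, 107.1]));
```

Paste the printed query into JOSM's Overpass download dialog, then save the resulting layer as GeoJSON.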

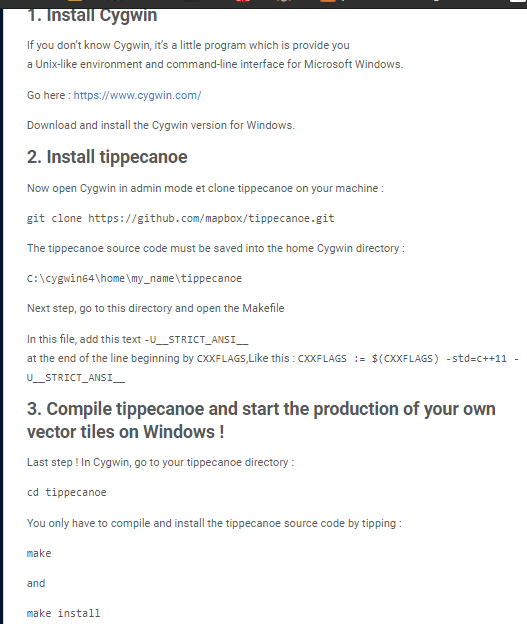

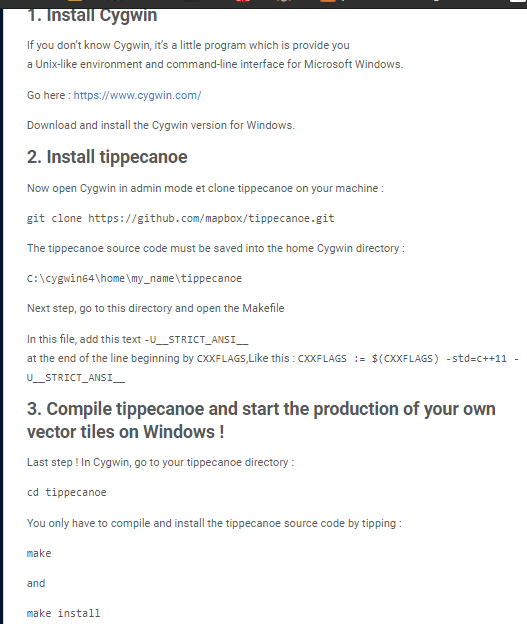

Second, convert these GeoJSON files to the MBTiles format using tippecanoe. Installing tippecanoe on macOS or Linux is pretty straightforward, but installing it on Windows needs a quick hack. I followed this guide, and it works.

Then combine all those GeoJSON files into one MBTiles file using tippecanoe.

Then convert that MBTiles file to PMTiles using go-pmtiles.
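To make the two conversion steps concrete, here is a small sketch that only assembles and prints the command lines. The input file names and `bks2.mbtiles` are assumptions (only `bks2.pmtiles` appears later in this post), and the `-zg` option (let tippecanoe guess a maximum zoom) is my own choice, not necessarily what was used here. `-L name:file` is tippecanoe's option for giving an input file an explicit layer name.

```javascript
// Layer name -> input file. Layer names come from the post; file names are assumed.
const LAYERS = {
  mainroad: "mainroad.geojson",
  suburb: "suburb.geojson",
  jalan_rest: "jalan_rest.geojson",
};

// Build the tippecanoe arguments: one output file, one named layer per input.
function tippecanoeArgs(layers, mbtilesPath) {
  const args = ["-o", mbtilesPath, "-zg"];
  for (const [name, file] of Object.entries(layers)) {
    args.push("-L", `${name}:${file}`);
  }
  return args;
}

// Build the go-pmtiles arguments: "pmtiles convert <input> <output>".
function pmtilesArgs(mbtilesPath, pmtilesPath) {
  return ["convert", mbtilesPath, pmtilesPath];
}

console.log(["tippecanoe", ...tippecanoeArgs(LAYERS, "bks2.mbtiles")].join(" "));
console.log(["pmtiles", ...pmtilesArgs("bks2.mbtiles", "bks2.pmtiles")].join(" "));
```

Naming the layers explicitly keeps them predictable, which matters later when the style refers to them by name.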

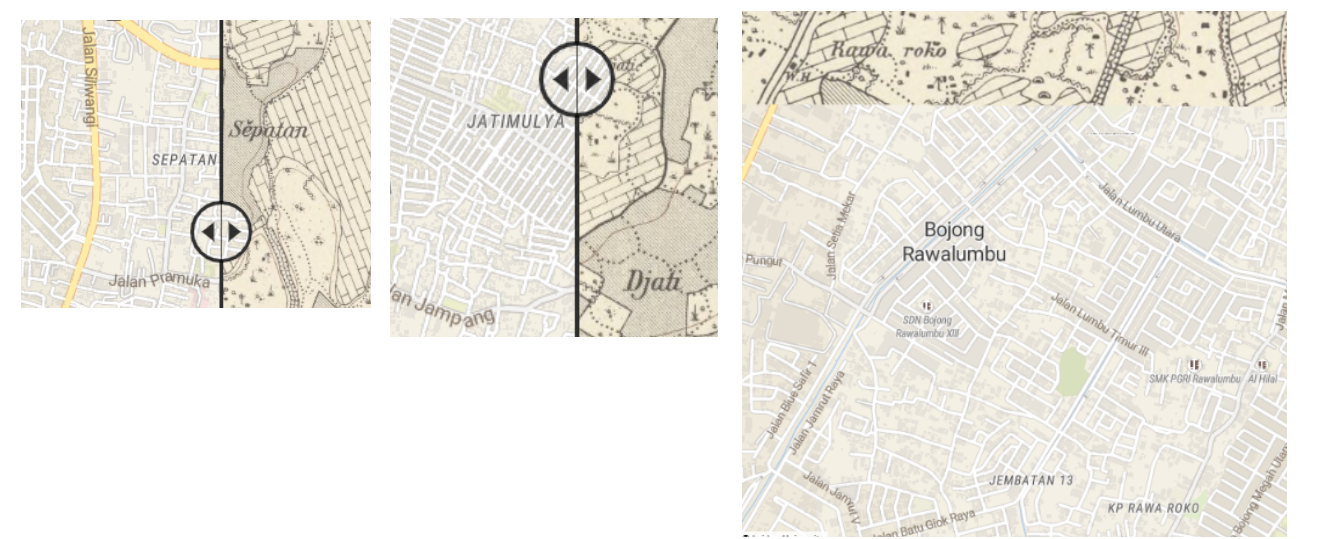

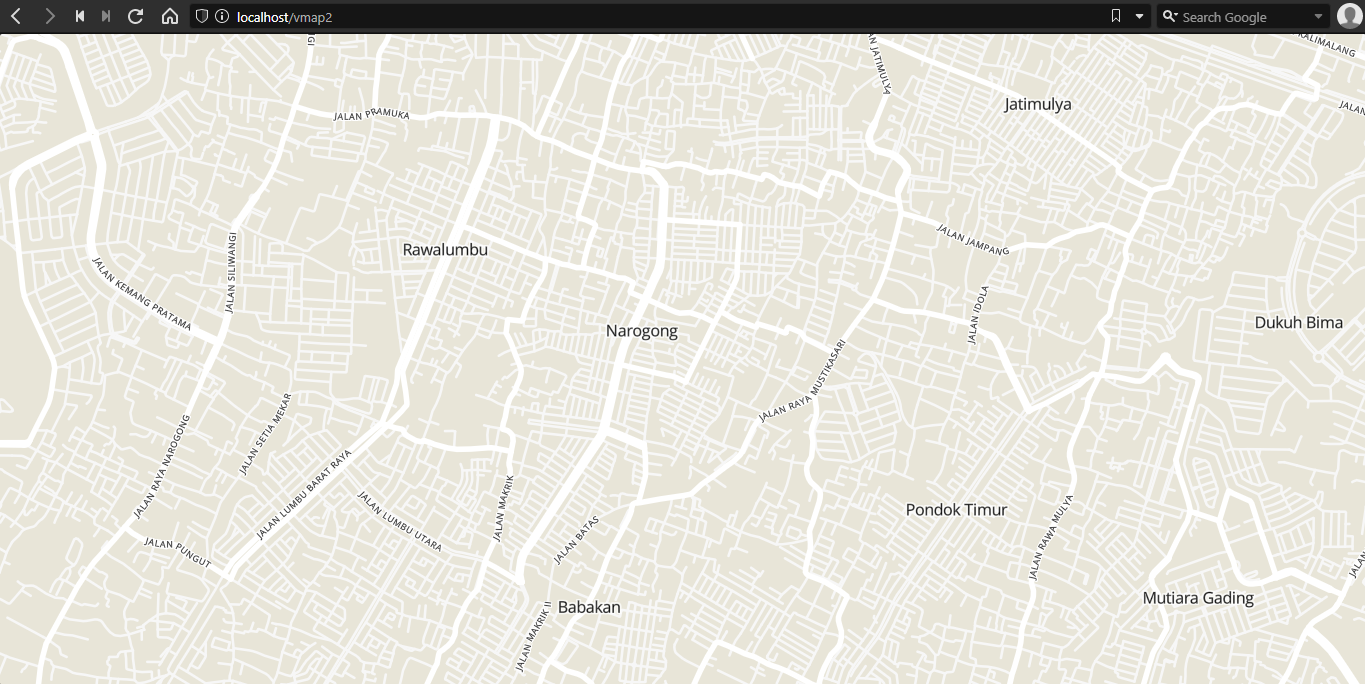

Now, let’s display that PMTiles file and do some styling.

In index.html, first load the maplibre-gl and pmtiles JavaScript libraries:

    <script src='maplibre-gl.js'></script>
    <link href='maplibre-gl.css' rel='stylesheet' />
    <script src="pmtiles-2.5.0.js"></script>

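Then, define the map:

    // Register the pmtiles:// protocol so MapLibre can read tiles from a PMTiles file
    let protocol = new pmtiles.Protocol();
    maplibregl.addProtocol("pmtiles", protocol.tile);
    console.log(maplibregl)
    // Create the map in the element with id "map"; center is [longitude, latitude]
    var map = new maplibregl.Map({
      container: 'map',
      style: 'styles/maptiler-basic.json',
      center: [106.99811303126697, -6.295502009348816],
      zoom: 11
    });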

The rest of the configuration is stored in that “maptiler-basic.json” file.

Let’s point the source at the PMTiles file:

    "sources": {
      "openmaptiles": {
        "type": "vector",
        "url": "pmtiles://bks2.pmtiles"
      }
    }

Then, configure the path to the font (glyph) files:

    "glyphs": "fonts-gh-pages/{fontstack}/{range}.pbf"

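As an illustration of how that glyphs template is filled in, the sketch below expands `{fontstack}` and `{range}` for a given character. Glyph files cover blocks of 256 code points (`0-255`, `256-511`, and so on). The helper name `glyphPath` is hypothetical, and the real renderer does this internally; this is only meant to show which files need to exist in the fonts folder.

```javascript
// Hypothetical helper: expand the glyphs template for one font stack and one code point.
function glyphPath(template, fontstack, codePoint) {
  // Each .pbf file holds a block of 256 consecutive code points.
  const start = Math.floor(codePoint / 256) * 256;
  const range = `${start}-${start + 255}`;
  return template.replace("{fontstack}", fontstack).replace("{range}", range);
}

const template = "fonts-gh-pages/{fontstack}/{range}.pbf";
// Basic Latin letters such as "A" (code point 65) fall in the first block.
console.log(glyphPath(template, "Open Sans Regular", "A".codePointAt(0)));
```

So the font name used in `text-font` has to match a folder name under `fonts-gh-pages`.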
Finally, configure the actual map style. The “source-layer” property of each style layer must match a layer name from tippecanoe’s output.

Mainroad layer style:

    {
      "id": "road_major_motorway",
      "type": "line",
      "source": "openmaptiles",
      "source-layer": "mainroad",
      "layout": {"line-cap": "round", "line-join": "round"},
      "paint": {
        "line-color": "hsl(0, 0%, 100%)",
        "line-offset": 0,
        "line-width": {"base": 1.4, "stops": [[8, 1], [16, 10]]}
      }
    }
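The `line-width` value above is a zoom function: the width is 1 at zoom 8 and 10 at zoom 16, and `base` controls how quickly it grows in between. Here is a rough sketch of that exponential interpolation, as I understand it from the style specification. The function name `interpolateStops` is hypothetical, and this is not the renderer's actual code.

```javascript
// Hypothetical helper: evaluate a {"base", "stops"} zoom function at a given zoom.
function interpolateStops({ base = 1, stops }, zoom) {
  const first = stops[0];
  const last = stops[stops.length - 1];
  // Outside the stop range, the value is clamped to the nearest stop.
  if (zoom <= first[0]) return first[1];
  if (zoom >= last[0]) return last[1];
  for (let i = 0; i < stops.length - 1; i++) {
    const [z0, v0] = stops[i];
    const [z1, v1] = stops[i + 1];
    if (zoom >= z0 && zoom <= z1) {
      // base 1 is plain linear interpolation; a larger base grows faster at high zooms.
      const t = base === 1
        ? (zoom - z0) / (z1 - z0)
        : (Math.pow(base, zoom - z0) - 1) / (Math.pow(base, z1 - z0) - 1);
      return v0 + t * (v1 - v0);
    }
  }
}

const mainroadWidth = { base: 1.4, stops: [[8, 1], [16, 10]] };
for (const z of [8, 11, 13, 16]) {
  console.log(`zoom ${z}: width ${interpolateStops(mainroadWidth, z).toFixed(2)}`);
}
```

With a base above 1, the road stays thin at low zoom levels and widens mostly in the last few zoom levels before the final stop.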

Suburb layer style:

    {
      "id": "place_label_city",
      "type": "symbol",
      "source": "openmaptiles",
      "source-layer": "suburb",
      "maxzoom": 16,
      "layout": {
        "text-field": "{name}",
        "text-font": ["Open Sans Regular"],
        "text-max-width": 10,
        "text-size": {"stops": [[3, 12], [8, 16]]}
      },
      "paint": {
        "text-color": "hsl(0, 0%, 0%)",
        "text-halo-blur": 0,
        "text-halo-color": "hsla(0, 0%, 100%, 0.75)",
        "text-halo-width": 2
      }
    }

jalan_rest layer style:

    {
      "id": "road_minor",
      "type": "line",
      "source": "openmaptiles",
      "source-layer": "jalan_rest",
      "minzoom": 13,
      "layout": {"line-cap": "round", "line-join": "round"},
      "paint": {
        "line-color": "hsl(0, 0%, 97%)",
        "line-width": {"base": 1.55, "stops": [[4, 0.25], [20, 30]]}
      }
    }

Road label configuration:

    {
      "id": "road_major_label",
      "type": "symbol",
      "source": "openmaptiles",
      "source-layer": "mainroad",
      "minzoom": 13,
      "layout": {
        "symbol-placement": "line",
        "text-field": "{name}",
        "text-font": ["Open Sans Regular"],
        "text-letter-spacing": 0.1,
        "text-rotation-alignment": "map",
        "text-size": {"base": 1.4, "stops": [[10, 8], [20, 14]]},
        "text-transform": "uppercase",
        "visibility": "visible"
      },
      "paint": {
        "text-color": "#000",
        "text-halo-color": "hsl(0, 0%, 100%)",
        "text-halo-width": 2
      }
    },
    {
      "id": "road_minor_label",
      "type": "symbol",
      "source": "openmaptiles",
      "source-layer": "jalan_rest",
      "minzoom": 13,
      "layout": {
        "symbol-placement": "line",
        "text-field": "{name}",
        "text-font": ["Open Sans Regular"],
        "text-letter-spacing": 0.1,
        "text-rotation-alignment": "map",
        "text-size": {"base": 1.4, "stops": [[10, 8], [20, 14]]},
        "text-transform": "uppercase",
        "visibility": "visible"
      },
      "paint": {
        "text-color": "#000",
        "text-halo-color": "hsl(0, 0%, 100%)",
        "text-halo-width": 2
      }
    }
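A style layer whose “source-layer” does not exist in the tiles simply draws nothing, which is easy to miss. As a sanity check, something like the sketch below could list the style layers that point at an unknown layer name. The function name `findUnknownSourceLayers` and the trimmed-down sample style are made up for illustration; in practice you would pass in the parsed contents of the style JSON.

```javascript
// Hypothetical helper: report style layers whose "source-layer" is not in the tile set.
function findUnknownSourceLayers(style, knownLayers) {
  const known = new Set(knownLayers);
  return style.layers
    // Layers without a "source-layer" (for example a background layer) are skipped.
    .filter((layer) => layer["source-layer"] !== undefined && !known.has(layer["source-layer"]))
    .map((layer) => ({ id: layer.id, sourceLayer: layer["source-layer"] }));
}

// Trimmed-down sample style: the last entry has a deliberate typo in its layer name.
const sampleStyle = {
  layers: [
    { id: "background", type: "background" },
    { id: "road_major_motorway", "source-layer": "mainroad" },
    { id: "place_label_city", "source-layer": "suburb" },
    { id: "road_minor", "source-layer": "jalan-rest" },
  ],
};

console.log(findUnknownSourceLayers(sampleStyle, ["mainroad", "suburb", "jalan_rest"]));
```

An empty result means every style layer refers to a layer name that tippecanoe actually wrote.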

Done!