Easy screenshot updates (useful in documentation, especially tutorials)

Posted by Mateusz Konieczny on 9 July 2018 in English.

When writing documentation for OSM tools and software, it is frequently useful to add screenshots. Tutorials in particular often feature several. I have always disliked how often such images become outdated. This may be caused by interface changes, map style changes and edits to OSM data.

Sometimes the documentation needs a more significant update; sometimes updating the images is enough. But even that can be very time-consuming.

When I was making my Overpass Turbo Tutorial for newbies* I wanted to find a way to make such images easy to recreate.

I found an interesting solution. Selenium is software usually used for testing websites. It has plenty of features, but the interesting one here is that one may write a simple script that will

- open a website

- wait for it to load

- take a screenshot

This way it may be possible to generate and recreate screenshots without manually taking them.

There are plenty of ways to use Selenium; I am using the Python bindings, connected to Firefox through geckodriver.

The time-saving code is relatively simple.

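Imports and browser setup:

    from selenium import webdriver
    import time
    from selenium.webdriver.common.by import By
    from selenium.webdriver.support.ui import WebDriverWait
    from selenium.webdriver.support import expected_conditions as EC

    # start Firefox through geckodriver; the later snippets reuse this driver
    driver = webdriver.Firefox()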

Let's say that I want an example with a simple website. This code will take a picture of search results and save it to the specified file.

    driver.get('https://duckduckgo.com/?q=wetland+OSM+Wiki&ia=web')
    driver.save_screenshot('wetland-search-results.png')

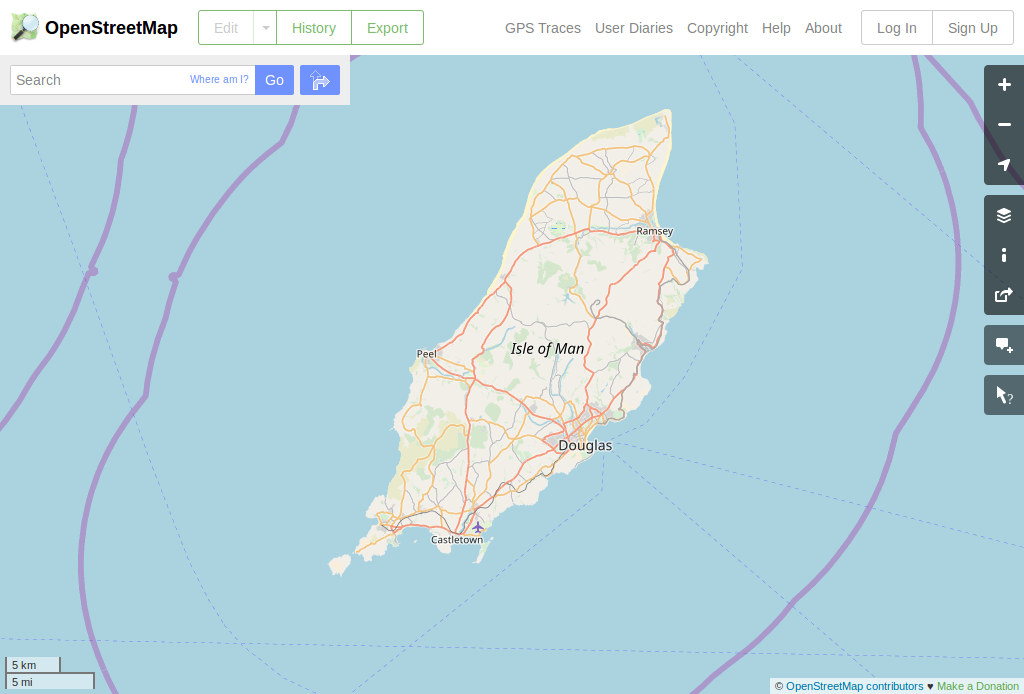
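The open, wait, screenshot steps listed earlier can be wrapped in one small helper. The following is only a sketch: `capture_page` and `settle_seconds` are names made up for this example, and the fixed pause is an assumption that a couple of seconds is enough for a simple page to finish rendering. It accepts any object with `get` and `save_screenshot` methods, such as a Selenium driver.

```python
import time


def capture_page(driver, url, image_file, settle_seconds=2.0):
    """Open url, pause briefly, then save a screenshot to image_file.

    driver is expected to offer get(url) and save_screenshot(path),
    as Selenium WebDriver objects do.
    """
    driver.get(url)                      # open the website
    time.sleep(settle_seconds)           # crude wait for the page to settle (assumed delay)
    driver.save_screenshot(image_file)   # take the screenshot
    return image_file
```

With a Selenium Firefox driver available as `driver`, calling `capture_page(driver, 'https://duckduckgo.com/?q=wetland+OSM+Wiki&ia=web', 'wetland-search-results.png')` would repeat the example above with an added pause.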

The following code sample sets the browser window size and interacts with the page to hide irritating banners, then takes a picture of the site. (Note that such use is also subject to the Tile Usage Policy, like any other automated tile downloading, but for regular tutorial updates the load will be no higher than that of a human doing the same.)

    driver.set_window_size(1024, 768)
    driver.get('https://www.openstreetmap.org/#map=10/54.2066/-4.5782')

    # hide banners about SOtM and other spam
    # (find_elements(By.CLASS_NAME, ...) is used because newer Selenium
    # releases no longer provide find_elements_by_class_name)
    for popup in driver.find_elements(By.CLASS_NAME, "close-wrap"):
        popup.click()

    driver.save_screenshot('Isle-of-Man.png')

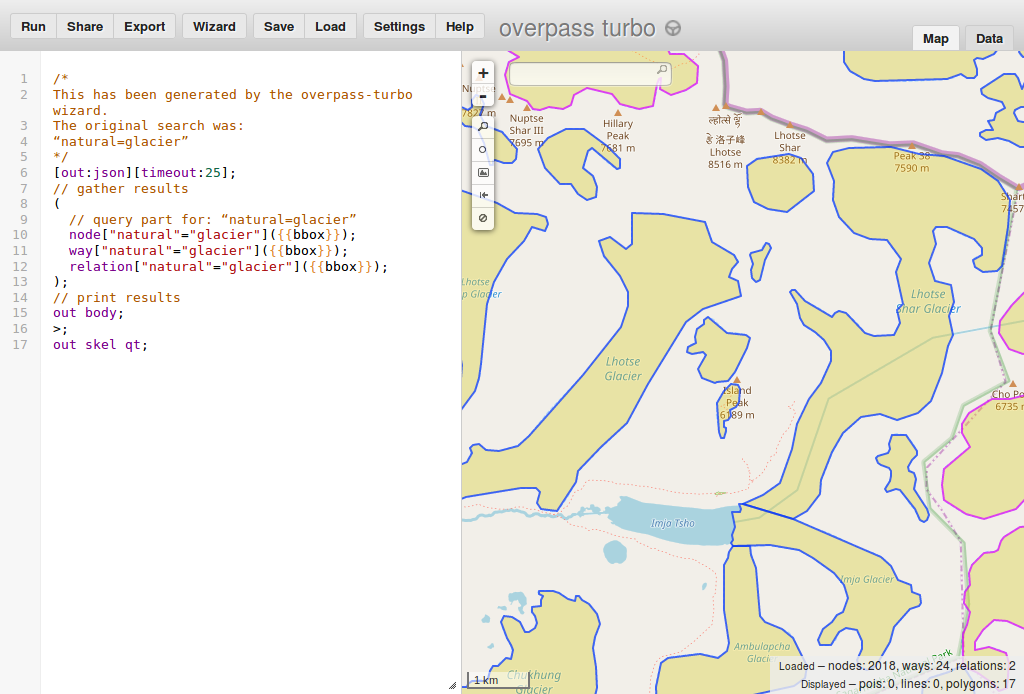

Overpass Turbo screenshots are a bit trickier when we want to display query results, because the picture should be taken only after the results have finished loading:

    def smart_overpass_capture(url, image_file, driver):
        driver.get(url)
        # wait up to 10 seconds until no element with class "loading" is present
        WebDriverWait(driver, 10).until_not(
            EC.presence_of_element_located((By.CLASS_NAME, "loading"))
        )
        driver.save_screenshot(image_file)

    smart_overpass_capture('http://overpass-turbo.eu/s/zNL', "Nepal-glaciers.png", driver)
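What the explicit wait does can be illustrated without Selenium. The sketch below is not Selenium's implementation, just the same polling idea in plain Python: keep checking a condition until it stops being true or a timeout expires. `wait_until_gone` and `is_present` are hypothetical names chosen for this example.

```python
import time


def wait_until_gone(is_present, timeout=10.0, poll_interval=0.5):
    """Poll is_present() until it returns a falsy value.

    Returns True once the condition is gone; raises TimeoutError if it
    is still present when `timeout` seconds have passed.
    """
    deadline = time.monotonic() + timeout
    while True:
        if not is_present():
            return True
        if time.monotonic() >= deadline:
            raise TimeoutError("condition still present after %s seconds" % timeout)
        time.sleep(poll_interval)
```

With Selenium, `is_present` could be something like `lambda: bool(driver.find_elements(By.CLASS_NAME, "loading"))`, which is true for as long as a loading indicator is on the page.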

Overall it turned out to be much easier than expected, and now to update all images I just need to run a Python script rather than fiddle with pixel alignment and crop images to an exact size.
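Such an update script can be little more than a list of pages and a loop. This is a minimal sketch, assuming every image is captured the same way; `SCREENSHOTS` and `regenerate_all` are hypothetical names, and the list holds only the one Overpass Turbo example from this post.

```python
# (url, image file) pairs; extend with one entry per screenshot in the tutorial
SCREENSHOTS = [
    ('http://overpass-turbo.eu/s/zNL', 'Nepal-glaciers.png'),
]


def regenerate_all(driver, jobs, capture):
    """Call capture(url, image_file, driver) for every job.

    Returns the list of image file names that were regenerated,
    in the order they were processed.
    """
    written = []
    for url, image_file in jobs:
        capture(url, image_file, driver)
        written.append(image_file)
    return written
```

A full run would then be `regenerate_all(driver, SCREENSHOTS, smart_overpass_capture)`, followed by `driver.quit()` to close the browser.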

One nice addition is proper image comparison in git. I used the method described at http://www.akikoskinen.info/image-diffs-with-git/. It is not ideal, but it is good enough for me.

I still have to figure out how to automate the generation of animated images showing how an interface is used. This would make it easy to tweak and improve such animations without manually recreating everything.

*If you have time, or are interested in how one may extract data with Overpass Turbo, feel free to write to me about how the tutorial may be improved (by OSM message or in the comments on this entry).

Discussion

Comment from alexkemp on 10 July 2018 at 10:57

Caution advised!

Whilst (as best I can tell) Mateusz does not suggest using the above methods live on a web-page, or indeed live on a web-server, this is the kind of thing that may prompt others to embed it in their application. Now, if your desire is to crash the 3rd-party website, then that is the perfect way to do so.

The way to avoid such an action is to do 2 things:–

cronjob to obtain the necessary image/whatever

It is unlikely that any 3rd-party website will object to you performing a daily cron-access.

Just in case the point has not got home, this happened (and occasionally re-occurs) on StopForumSpam (SFS). This is the sequence of events:–

Comment from Mateusz Konieczny on 10 July 2018 at 20:42

Yes, it is code intended to be run manually when updating documentation, for example once a year.

I thought about the potential for misuse, which is why I mentioned the Tile Usage Policy, but I had not considered that someone might run such a script automatically (for example, on each page load) rather than manually.

Comment from alexkemp on 10 July 2018 at 20:58

Hi Mateusz Konieczny

Sure; I trust that it was very clear that there was zero issue from me towards yourself on that basis. However, SFS never thought that anyone could be so inept, or indeed brain-dead, as to write a plugin that would offer to access the SFS API on every user access. Yet sure enough, that is what happened (in addition to freelance efforts).

Hundreds of thousands of users access SFS each day, so you can imagine the effects of those additional web-servers multiplied by each user to those web-servers. A true DDOS.